Xiaomi Pad 8 Pro: Entry Point into Android Tablet On-Device AI & Local LLM

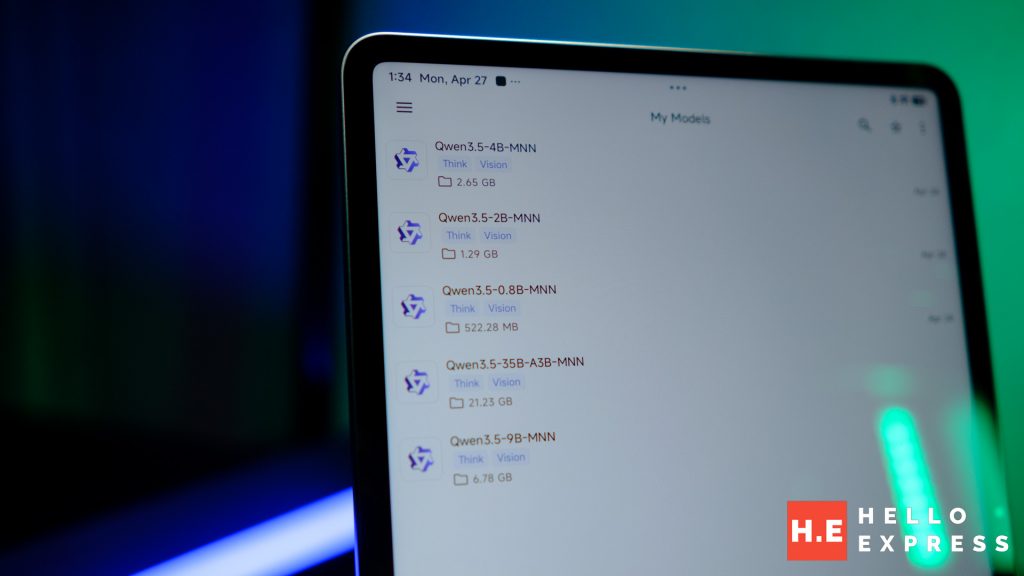

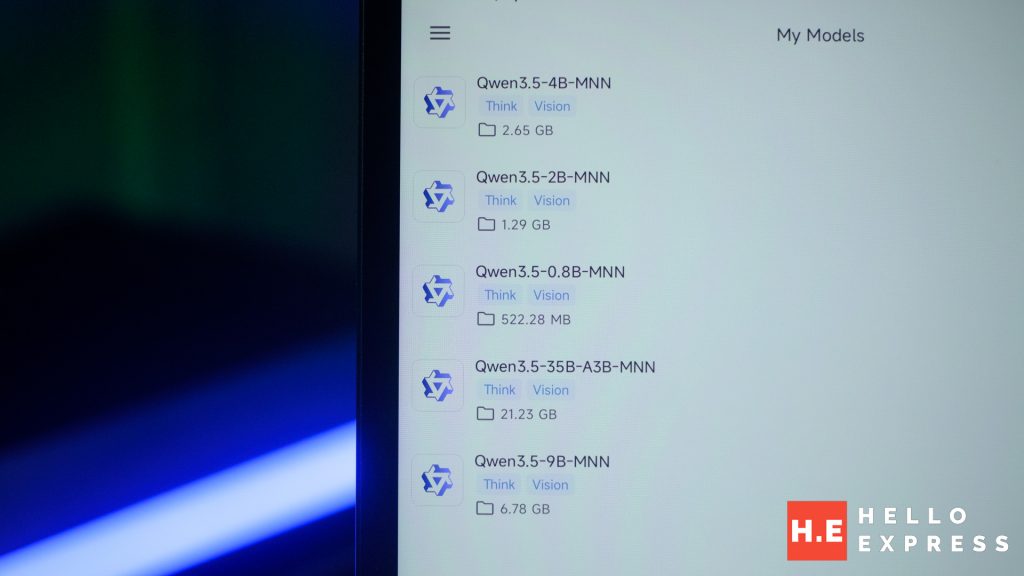

The Snapdragon 8 Elite gives the Xiaomi Pad 8 Pro the processing muscle to run local LLMs entirely on-device: no cloud, no subscription, no signal required. We tested five Qwen 3.5 models to find the ceiling. Three loaded. Two didn’t. Here’s what you get at RM2,699, and why the 12GB configuration makes it even better.

Why On-Device AI Is Worth the Trouble

Most devices that can run local AI models are either too expensive, too large, or too underpowered to make the experience practical. The Xiaomi Pad 8 Pro sits at an inflection point: the Snapdragon 8 Elite on 3nm is the first chip generation where on-device inference on a mid-range tablet stops being a curiosity and starts being a daily tool. At RM2,699, it is the most accessible entry into serious local LLM inference available in Malaysia right now.

On a device with 8GB of RAM, the question is not whether local inference is possible. It is which models fit, how fast they run, and whether the output quality is actually useful. We tested five models to find out. The results are more encouraging than the spec sheet suggests.

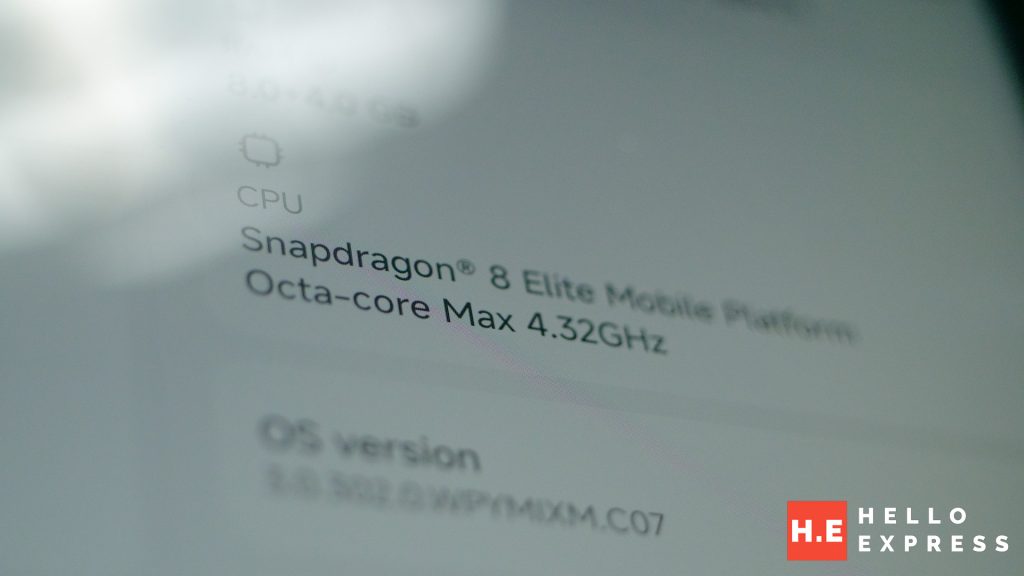

The Hardware

| Spec | Xiaomi Pad 8 Pro (8GB) |
| --- | --- |
| Chipset | Snapdragon 8 Elite (3nm) |
| RAM | 8GB LPDDR5X |
| Storage | 256GB UFS 4.1 |
| Display | 11.2″ 3.2K (3200×2136) 144Hz |
| Battery | 9200mAh |
| Charging | 67W HyperCharge |
| OS | Xiaomi HyperOS 3 (Android) |
| Malaysian Price | RM2,699 (8GB+256GB) |

The Snapdragon 8 Elite is the right chip for this task — not because of raw speed, but because of power efficiency. On 3nm fabrication, it runs inference workloads at lower wattage than previous generations, which keeps the silicon cool and the clock speeds stable across extended sessions. The 8GB of LPDDR5X is where the story gets complicated.

The 8GB Reality: What It Means for Model Loading

RAM is the hard ceiling for local LLM inference. It is not a soft limit that causes slowdowns — it is a door. When a model’s weights exceed available memory, the process fails to load entirely. There is no graceful degradation.

On the Xiaomi Pad 8 Pro with 8GB LPDDR5X, the operating system and background processes consume approximately 2.5–3GB before MNN Chat opens. That leaves roughly 5–5.5GB for model weights and the KV Cache — the working memory that keeps multi-turn conversations coherent.

The local LLMs we tested are Qwen 3.5 variants that carry both Thinking and Vision (VL) capabilities. Vision-Language models carry additional encoder weights on top of the base language model, which makes them heavier than pure-text equivalents at the same parameter count. The 9B and 35B models never had a realistic chance on this configuration.

| All five models tested are Qwen 3.5 variants with both Thinking and Vision (VL) capabilities enabled. VL models are meaningfully heavier than pure-text models at the same parameter count. A pure-text Qwen 3.5 4B would load faster and decode at slightly higher speeds than the figures recorded here. |
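The loading ceiling can be approximated with back-of-envelope arithmetic. The sketch below is a rough estimate, not a measurement. Only the 8GB total and the 2.5–3GB OS figure come from the text above; the 4-bit weight quantization and the 1.2× overhead factor (standing in for the vision encoder, KV cache, and runtime buffers) are assumptions chosen purely for illustration.

```python
# Rough fit check: does a model fit in the RAM left over after the OS?
# ASSUMPTIONS (not from the article): 4-bit quantized weights and a 1.2x
# overhead factor for the vision encoder, KV cache and runtime buffers.

TOTAL_RAM_GB = 8.0
OS_RESERVED_GB = 3.0      # upper end of the 2.5-3GB range quoted above
BITS_PER_WEIGHT = 4       # assumed quantization level
OVERHEAD_FACTOR = 1.2     # assumed, illustrative only


def estimated_footprint_gb(params_billions: float) -> float:
    """Estimate memory use: 1B parameters at 8 bits is roughly 1 GB."""
    weights_gb = params_billions * BITS_PER_WEIGHT / 8
    return weights_gb * OVERHEAD_FACTOR


def fits(params_billions: float,
         total_ram_gb: float = TOTAL_RAM_GB,
         os_reserved_gb: float = OS_RESERVED_GB) -> bool:
    """True if the estimated footprint fits in the RAM left after the OS."""
    return estimated_footprint_gb(params_billions) <= total_ram_gb - os_reserved_gb


for size in (0.8, 2, 4, 9, 35):
    print(f"{size}B: ~{estimated_footprint_gb(size):.1f} GB, fits={fits(size)}")
```

Under these assumptions the 9B estimate lands within a few hundred megabytes of the available budget, so its result is sensitive to the numbers chosen. The 35B estimate misses by a wide margin regardless.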

Benchmark Results: Five Models, One Question

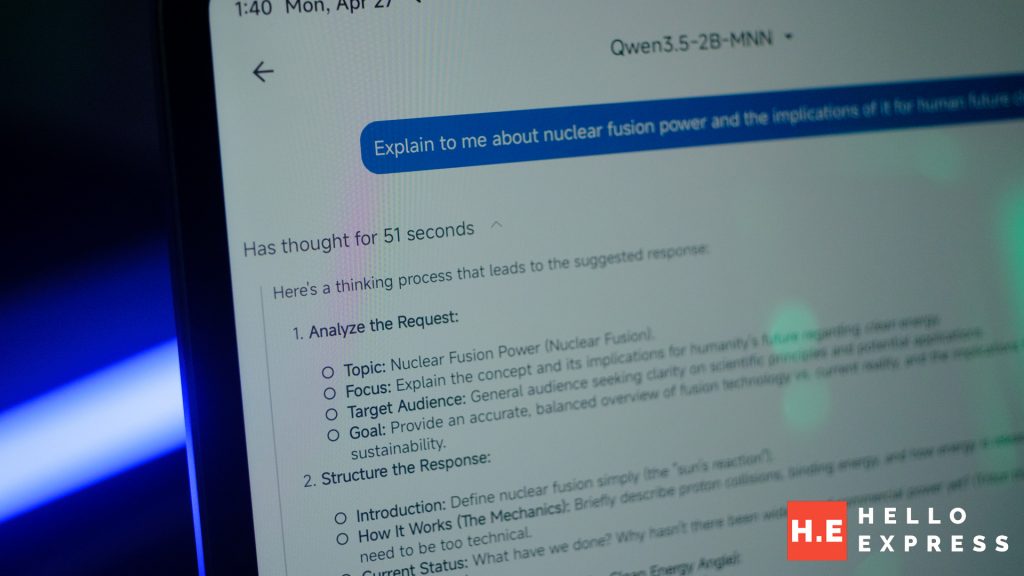

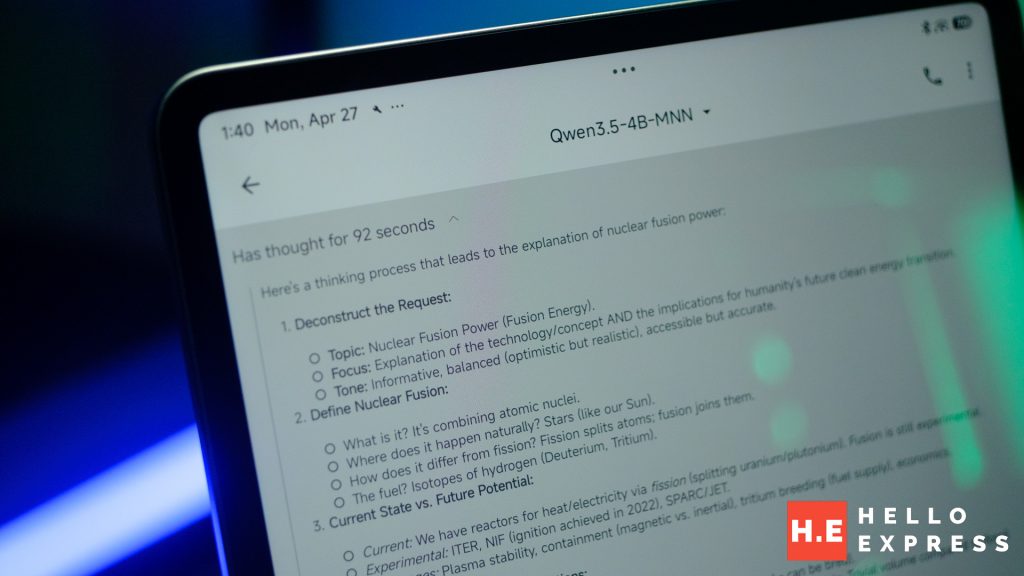

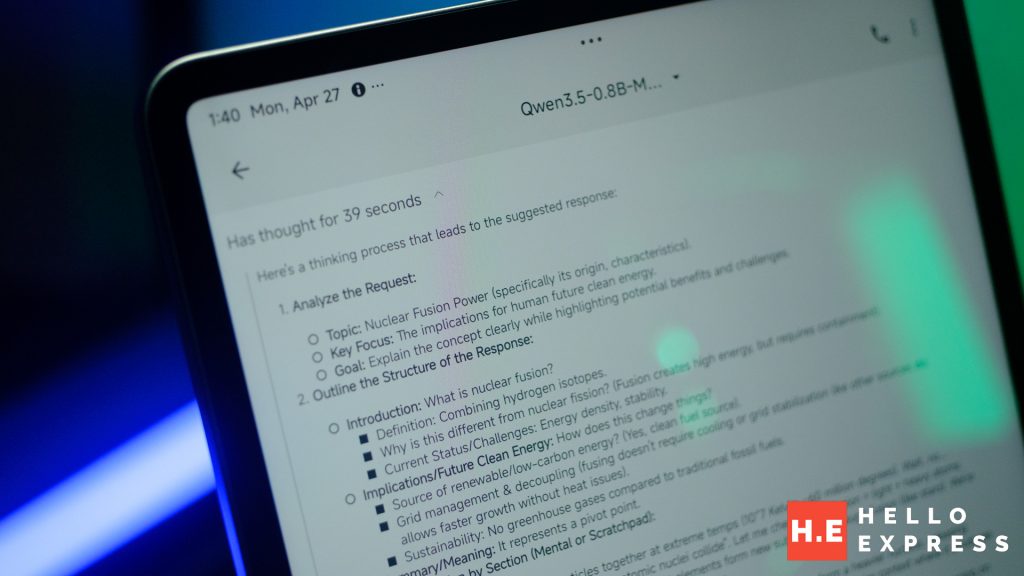

Test prompt: “Tell me about nuclear fusion power and its implications in the future of clean energy.” All tests run on MNN Chat with Thinking mode enabled.

| Model | Prefill | Prefill t/s | Decode | Output tokens | Decode t/s | Status |

| Qwen 3.5 0.8B VL | 0.19s | 210.33 | 50.39s | 2,576 | 51.13 t/s | ✅ Loaded |

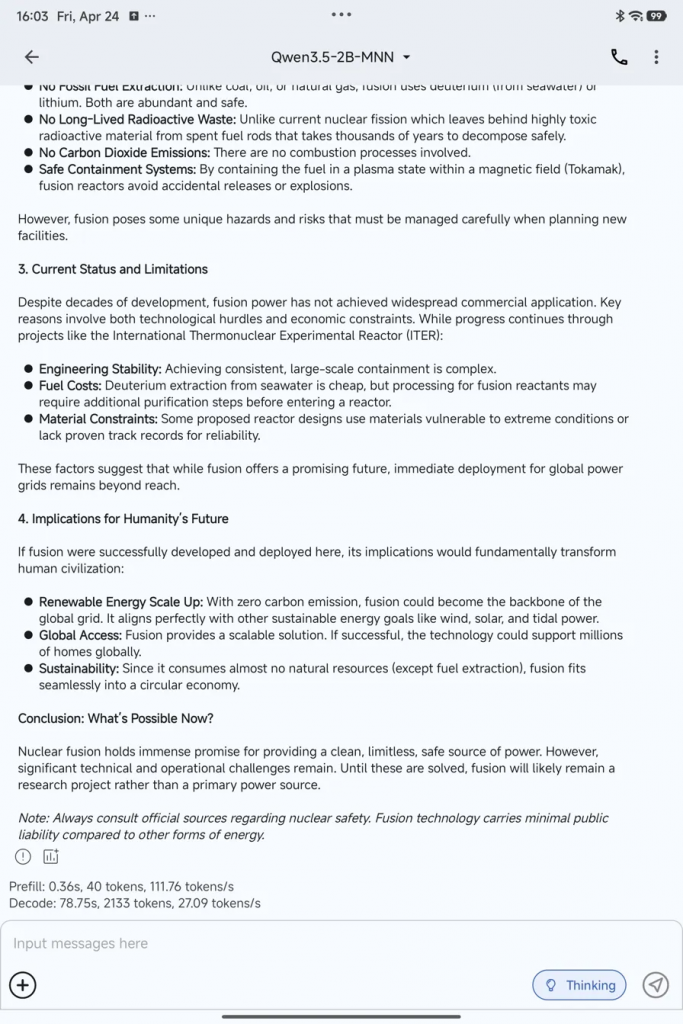

| Qwen 3.5 2B VL | 0.36s | 111.76 | 78.75s | 2,133 | 27.09 t/s | ✅ Loaded |

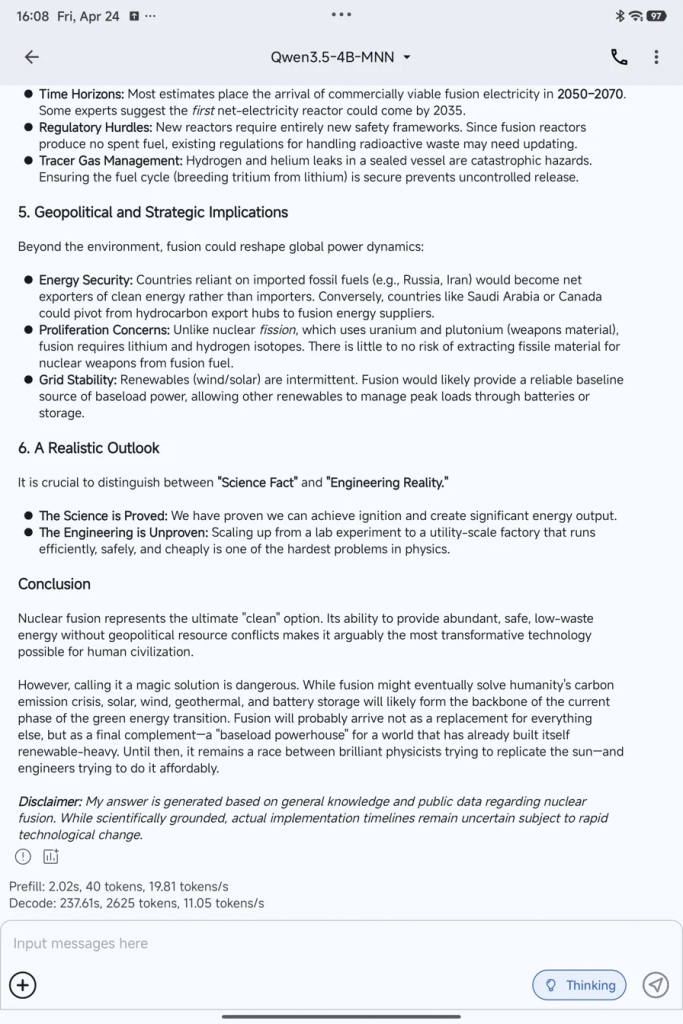

| Qwen 3.5 4B VL | 2.02s | 19.81 | 237.61s | 2,625 | 11.05 t/s | ✅ Loaded |

| Qwen 3.5 9B VL | — | — | — | — | — | ❌ Failed |

| Qwen 3.5 35B VL | — | — | — | — | — | ❌ Failed |

Reading the Numbers

The prefill speed collapse between 2B and 4B is the clearest signal of memory pressure. At 0.8B, prefill completes in 0.19 seconds. At 2B, 0.36 seconds. At 4B, 2.02 seconds — over five times slower than the 2B, for a model only twice the size. The Snapdragon 8 Elite is not slowing down. The memory bus is saturating as it loads the larger weight matrix.
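The prefill figures can be cross-checked with a few lines of arithmetic. The sketch below assumes the reported prefill speed is simply prompt tokens divided by prefill time, which the table does not state explicitly. The function and variable names are illustrative.

```python
# Prefill figures from the benchmark table: (seconds, tokens per second).
prefill = {
    "0.8B": (0.19, 210.33),
    "2B": (0.36, 111.76),
    "4B": (2.02, 19.81),
}


def implied_prompt_tokens(seconds: float, tokens_per_second: float) -> float:
    """Prompt length implied by a prefill time and a prefill speed."""
    return seconds * tokens_per_second


for name, (secs, tps) in prefill.items():
    print(f"{name}: ~{implied_prompt_tokens(secs, tps):.0f} prompt tokens")

# Ratio of 4B prefill time to 2B prefill time for the same prompt.
slowdown_2b_to_4b = prefill["4B"][0] / prefill["2B"][0]
print(f"2B -> 4B prefill slowdown: {slowdown_2b_to_4b:.1f}x")
```

All three rows imply a prompt of about 40 tokens, so the three runs processed the same input and the prefill times are directly comparable. The 2B-to-4B ratio works out to roughly 5.6×.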

Decode speed tells the same story differently. At 0.8B you are reading at 51 tokens per second — faster than a human reads. At 2B you drop to 27 t/s, which still feels like a fast typist. At 4B you land at 11 t/s, which is a perceptible pause between sentences but well within usable range for complex queries you would read carefully anyway.
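To translate decode speed into waiting time, the sketch below recomputes each rate from the table's decode time and output token count, then estimates how long a reply of a given length would take. The 500-token reply is a hypothetical length picked for illustration, and the estimate assumes decode speed stays constant over the whole response.

```python
# Decode figures from the benchmark table: (seconds, output tokens).
decode = {
    "0.8B": (50.39, 2576),
    "2B": (78.75, 2133),
    "4B": (237.61, 2625),
}


def decode_rate(seconds: float, tokens: int) -> float:
    """Average decode speed in tokens per second."""
    return tokens / seconds


def seconds_for(tokens: int, rate: float) -> float:
    """Estimated decode time, assuming a constant tokens-per-second rate."""
    return tokens / rate


for name, (secs, toks) in decode.items():
    rate = decode_rate(secs, toks)
    wait = seconds_for(500, rate)  # 500 tokens: hypothetical reply length
    print(f"{name}: {rate:.2f} t/s, a 500-token reply takes ~{wait:.0f}s")
```

The recomputed rates agree with the reported decode speeds to within rounding, which suggests the table's columns are internally consistent.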

The 9B failure is the 8GB wall made visible. There is no error message to investigate. The model simply does not load.

Output Quality: Speed Is Only Half the Story

Token speed tells you how fast the model responds. It does not tell you whether the response is worth reading. All three working models answered the nuclear fusion question at length, but the quality difference between them is significant and matters when choosing the right model for a given task.

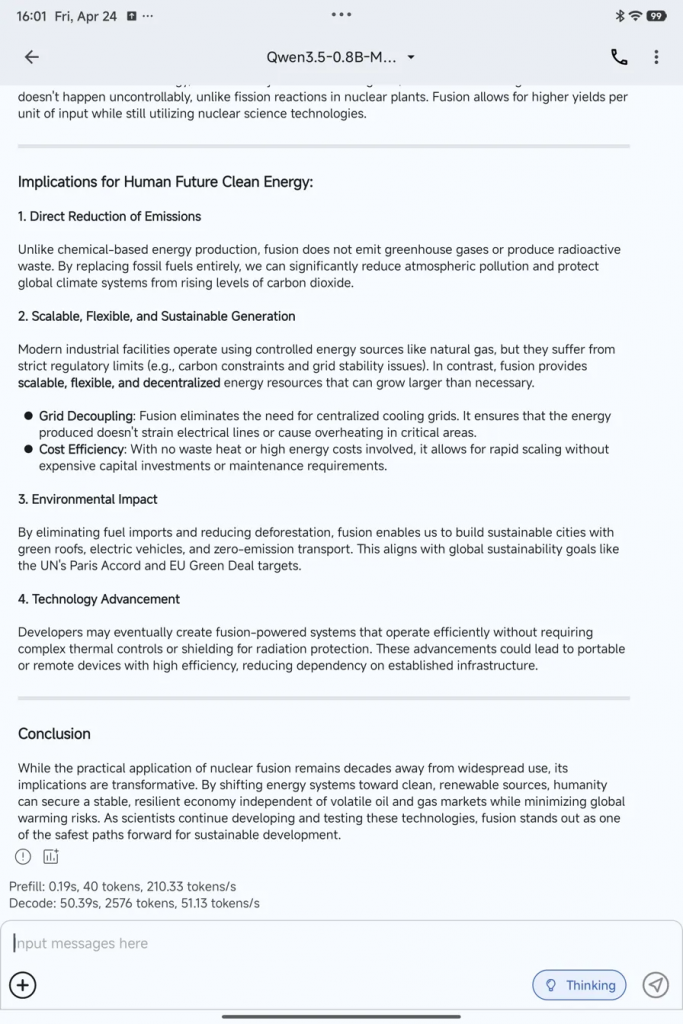

0.8B — Fast, Fluent, Overconfident

The 0.8B output reads confidently and is structured well. For a casual reader the answer would pass. Under scrutiny, it begins to invent. Claims like “fusion eliminates the need for centralised cooling grids” are not accurate — fusion plants will still require extensive cooling systems. The suggestion that fusion could eventually power “portable or remote devices” with high efficiency is speculative well beyond what the science supports.

The 0.8B model does not know what it does not know. It fills gaps with plausible-sounding text rather than flagging uncertainty. For quick lookups, general curiosity, or questions where you will verify the answer anyway, it is genuinely useful at 51 t/s. For anything where accuracy carries consequences, it is unreliable.

2B — The Daily Driver

The 2B output is meaningfully more grounded. It correctly names ITER and the Tokamak containment mechanism. It correctly identifies deuterium from seawater as the fuel source. The limitations section is honest: “immediate deployment for global power grids remains beyond reach” is the right framing, not a hedge. The disclaimer advising readers to consult official sources on nuclear safety is a sign the model has learned to signal the edges of its knowledge.

At 27 t/s, responses arrive fast enough that the wait is never frustrating. This is the model that covers most daily use cases — email drafting, research summaries, document Q&A, quick reasoning tasks. It earns the designation of daily driver not because it is perfect, but because the gap between speed and accuracy is narrow enough to be genuinely useful across a wide range of inputs.

4B — When Accuracy Matters

The 4B output operates at a different level. The “Science Fact vs Engineering Reality” framework it constructs to structure the answer is genuinely insightful — a distinction that most science communicators would reach for and that the smaller models do not produce. The geopolitical section is accurate: the proliferation risk comparison between fission and fusion is correctly explained, and the 2035 first net-electricity / 2050–2070 commercial viability timeline aligns with current expert consensus. The conclusion is measured and does not oversell.

You could fact-check this output and find it mostly standing. At 11 t/s the wait for a long response like this one is real — just under four minutes for 2,625 tokens. For complex research questions, structured drafting, or anything where a hallucination has consequences, that wait is worthwhile. For quick exchanges, switch to the 2B.

| | 0.8B | 2B | 4B |
| --- | --- | --- | --- |
| Factual accuracy | Surface-level | Good | Strong |
| Hallucination risk | High | Low-medium | Low |
| Reasoning depth | Basic structure | Competent | Genuinely analytical |
| Knowledge limits awareness | Poor — invents confidently | Good — hedges appropriately | Strong — explicit uncertainty |
| Best use case | Quick lookups, casual Q&A | Daily driver for most tasks | Research, drafting, high-stakes queries |

Which Local LLM Should You Run?

The honest answer depends on what you are actually doing with the device.

Run the 0.8B when:

- You need an instant response and accuracy is secondary

- The question is simple enough that the answer is verifiable at a glance

- You are testing prompts or workflows where iteration speed matters

- Battery conservation is a priority — the 0.8B is by far the least demanding

Run the 2B when:

- You want one model that covers the majority of real daily use

- You are drafting emails and messages, summarising documents, or handling general Q&A

- You are researching topics where you have enough background to catch obvious errors

- You want Thinking mode active but cannot wait four minutes per response

Run the 4B when:

- The question is genuinely complex and accuracy matters

- You are drafting something that will be read by someone else

- You are handling technical, legal, medical, or scientific queries where hallucination has real cost

- You have time — long decode sessions work fine while the screen is off and the 9200mAh battery is doing its job
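The guidance above can be reduced to a simple rule of thumb: pick the highest-quality model that finishes within the time you are willing to wait. The sketch below is one hypothetical way to express that, using the measured decode speeds from the benchmark table. The function name, the token counts, and the wait budgets are illustrative assumptions, and the rule ignores prefill time and Thinking-mode overhead.

```python
# Measured decode speeds from the benchmark table (tokens per second),
# ordered from highest to lowest output quality.
MODELS_BY_QUALITY = [("4B", 11.05), ("2B", 27.09), ("0.8B", 51.13)]


def pick_model(expected_tokens: int, max_wait_seconds: float) -> str:
    """Return the highest-quality model that finishes within the wait budget."""
    for name, tokens_per_second in MODELS_BY_QUALITY:
        if expected_tokens / tokens_per_second <= max_wait_seconds:
            return name
    return "0.8B"  # nothing fits the budget: fall back to the fastest model


# Hypothetical 2,000-token reply under three different wait budgets.
print(pick_model(expected_tokens=2000, max_wait_seconds=240))
print(pick_model(expected_tokens=2000, max_wait_seconds=90))
print(pick_model(expected_tokens=2000, max_wait_seconds=45))
```

With a four-minute budget the rule selects the 4B, with 90 seconds it drops to the 2B, and with 45 seconds only the 0.8B finishes in time.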

The 9B Local LLM Wall, and What’s Beyond It

The 9B failure is not a software problem. It is the architecture of the device stating its limit. On the 8GB configuration, the operating system, active apps, and model weights cannot coexist above a certain combined size. The 9B VL model crosses that line.

The path to 9B and above on Android hardware without a PC goes through the 12GB configuration of the Xiaomi Pad 8 Pro at RM2,999, which carries 12GB LPDDR5T rather than LPDDR5X. That extra 4GB of headroom, combined with the faster memory standard, is the difference between a 4B ceiling and a 9B ceiling. The 8GB model is the entry point into serious on-device inference. The 12GB model raises the ceiling far enough for practical mobile use, although the 35B remains out of reach. If your workflow is regularly pushing the 4B to its limits, the RM300 difference between configurations is the most targeted upgrade on this spec sheet.

| The models tested here are Qwen 3.5 VL variants, which are heavier than pure-text equivalents at the same parameter count. A pure-text Qwen 3.5 4B or Llama 3.2 3B would load with slightly more headroom and decode faster on the same 8GB device. If Vision capability is not a requirement for your workflow, pure-text models extract more performance from the available RAM. |

Why Bother? The Case for Keeping Inference on Device

The performance figures above are not competitive with GPT-4o or Gemini 2.0 in raw capability. A 4B local LLM running at 11 t/s does not outperform a frontier cloud model. The case for local inference is not capability — it is control.

- Your prompts never leave the device. Not encrypted in transit, not stored on a server, not processed in a data centre you have no visibility into. Air-gapped by physics, not policy.

- The Xiaomi Pad 8 Pro in airplane mode at 35,000 feet runs the same inference as it does on your home WiFi. A cloud-dependent assistant becomes inert without connectivity. A local model does not.

- MNN Chat is free. The models are free. The compute is the device you already own. There is no RM50/month reasoning tier.

- Feed the model a contract, a report, or a set of meeting notes. Ask questions about it. The document never leaves the device. This is the use case where local inference earns its keep most clearly for professional users.

For more information on private AI, check out our dedicated article.

The Verdict

The Xiaomi Pad 8 Pro on 8GB is a capable on-device inference platform with a clearly defined ceiling. Three of the five models load cleanly. The 0.8B and 2B are immediately practical. The 4B is the quality ceiling, and it is a good one: output that you could fact-check and find mostly standing, on a tablet you already carry.

The 8GB constraint is real. The 9B wall is real. But within the three tiers that work, the quality ladder is meaningful enough that “on-device AI is useless on 8GB” is not the right conclusion. The right conclusion is: know which model to reach for, and know why.

Part two of this series covers what happens when the on-device ceiling is not enough — and how a gaming PC with an RTX 5070 becomes the inference engine your phone connects to from anywhere.

| Model | Decode Speed | Quality Tier | Best For |
| --- | --- | --- | --- |
| Qwen 3.5 0.8B VL | 51.13 t/s | Usable — with caveats | Speed-first, verify output |
| Qwen 3.5 2B VL | 27.09 t/s | Good daily driver | Most tasks, most of the time |
| Qwen 3.5 4B VL | 11.05 t/s | Strong — fact-checkable | Complex queries, drafting |
| Qwen 3.5 9B VL | Failed to load | — | Requires 12GB config |
| Qwen 3.5 35B VL | Failed to load | — | PC inference (Part 2) |

Help support us!

If you are interested in the Xiaomi Pad 8 Pro, we would really appreciate it if you purchased it via the links below. The affiliate links won’t cost you anything extra, but they are a great help in keeping the lights on here at HelloExpress.
