Q.ANT Deploys Second-Generation Photonic Processors at LRZ — Light-Based Computing Achieves 6x Energy Reduction

TLDR:

- Q.ANT deploys Gen 2 photonic processors at Leibniz Supercomputing Centre (LRZ) in Germany

- Photonic AI accelerators compute with light rather than transistor switching, cutting energy consumption 6x compared to the first generation

- 50x higher matrix multiplication throughput compared to first generation

- 25x faster inference on ResNet-18 neural network benchmarks

- PCIe interface allows integration with existing CPU/GPU HPC infrastructure

- Technology targets AI’s escalating energy problem in data centers worldwide

Photonic Computing Moves from Lab to Production

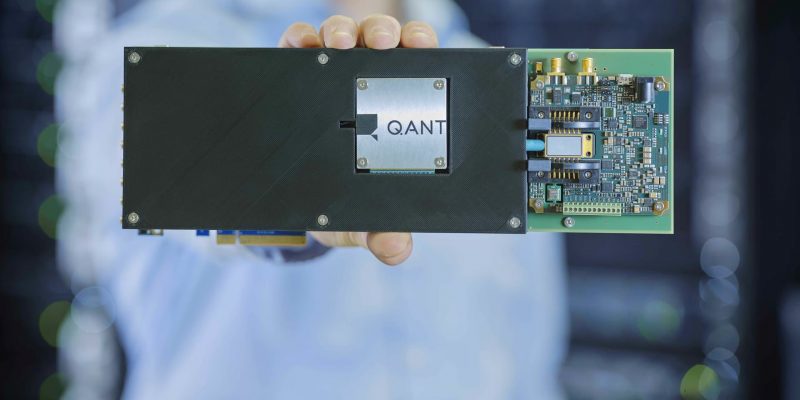

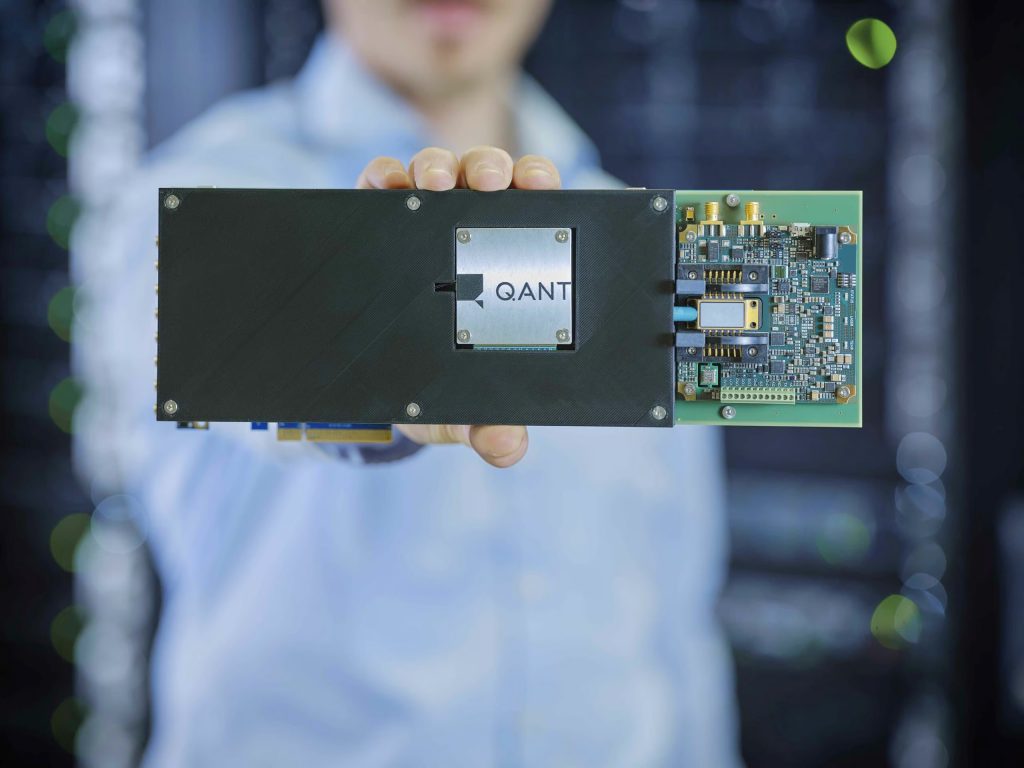

Q.ANT has deployed its second-generation photonic processors at the Leibniz Supercomputing Centre (LRZ) in Germany, marking a significant step toward practical light-based computing in real data center environments. The deployment moves photonic co-processing beyond laboratory proof-of-concept into rigorous production evaluation under actual high-performance computing workloads.

The technology addresses one of the most pressing challenges in modern computing: the explosive energy consumption driven by AI training and inference. As AI models grow larger and more computationally demanding, the electricity required to run data centers has become a limiting factor in scaling infrastructure. Q.ANT’s photonic approach offers a fundamentally different computational paradigm that could alter this trajectory.

How Photonic Computing Works

Unlike conventional electronic processors that rely on transistor switching to execute calculations, Q.ANT’s Native Processing Units (NPUs) perform mathematical operations directly in the optical domain using Thin-Film Lithium Niobate (TFLN) photonic integrated circuits. Light travels through waveguides on the chip, with mathematical operations executed by manipulating light signals rather than electrons.
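To make the idea of analog computation concrete, here is a minimal toy sketch of an analog matrix-vector product: each output is an ideal weighted sum plus a small amount of read-out noise. This is a generic illustration, not Q.ANT’s circuit design; the function names, the Gaussian noise model, and the `noise_std` value are all assumptions made for the example.

```python
import random


def exact_matvec(weights, inputs):
    """Reference digital result: one dot product per weight row."""
    return [sum(w * x for w, x in zip(row, inputs)) for row in weights]


def analog_matvec(weights, inputs, noise_std=0.01, seed=0):
    """Toy analog model (an assumption, not Q.ANT's design).

    Each output is the ideal weighted sum plus Gaussian noise that
    stands in for imperfections of an analog signal path.
    """
    rng = random.Random(seed)  # seeded so runs are repeatable
    outputs = []
    for row in weights:
        ideal = sum(w * x for w, x in zip(row, inputs))
        outputs.append(ideal + rng.gauss(0.0, noise_std))
    return outputs


# Hypothetical weights and inputs, chosen only for illustration.
weights = [[0.2, 0.5, 0.1], [0.9, 0.0, 0.3]]
inputs = [1.0, 0.5, 0.25]

exact = exact_matvec(weights, inputs)
noisy = analog_matvec(weights, inputs, noise_std=0.01)
max_error = max(abs(a - b) for a, b in zip(exact, noisy))
print("exact:", exact)
print("analog (toy):", noisy)
print("max abs error:", max_error)
```

Comparing the two results shows why accuracy is a natural question for any analog architecture: the answer is only as good as the noise level allows.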

This approach targets a critical bottleneck in electronic computing: the heat generated when transistors switch states. As computational density increases, cooling requirements become a dominant factor in data center design and operational costs. Photonic processors generate minimal on-chip heat, which could reduce, and in some cases potentially eliminate, cooling infrastructure requirements.

The Gen 2 processors install via standard PCIe interfaces, allowing them to integrate with existing HPC systems and operate alongside traditional CPUs and GPUs within heterogeneous computing architectures.

Benchmark Results

Testing at LRZ under production workloads demonstrated substantial improvements over Q.ANT’s first-generation system:

Matrix multiplication throughput: More than 50x higher than first-generation NPUs. Matrix multiplication is a core operation in neural network training and inference.

ResNet-18 inference: 25x faster inference on this standard computer vision benchmark, demonstrating real-world applicability to common AI tasks.

Energy consumption: 6x lower energy consumption for typical workloads compared to first-generation results, confirming the efficiency advantages of photonic computation.

AI accuracy: Accuracy levels sufficient to support state-of-the-art AI applications, addressing concerns that alternative computing architectures might sacrifice precision for efficiency.

Additional improvements in Gen 2 include enhanced analog units optimized for nonlinear functions, which reduce parameter counts and training depth requirements for certain AI models.
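Multipliers such as "6x lower" and "25x faster" are easier to compare once converted into percentage reductions. The short sketch below does that arithmetic for the figures reported above. The helper names and the 600-unit baseline are hypothetical and chosen only for illustration; they are not measurements from LRZ or Q.ANT.

```python
def reduction_percent(factor):
    """Convert an 'N times lower' factor into a percentage reduction."""
    if factor <= 0:
        raise ValueError("factor must be positive")
    return (1.0 - 1.0 / factor) * 100.0


def scaled_value(baseline, factor):
    """Value left after an 'N times lower' improvement over a baseline."""
    if factor <= 0:
        raise ValueError("factor must be positive")
    return baseline / factor


# Improvement factors as reported in the article (relative to Gen 1).
ENERGY_FACTOR = 6    # 6x lower energy consumption
SPEED_FACTOR = 25    # 25x faster ResNet-18 inference

energy_cut = reduction_percent(ENERGY_FACTOR)
time_cut = reduction_percent(SPEED_FACTOR)

# Hypothetical baseline: a Gen 1 job that used 600 energy units.
gen2_energy = scaled_value(600.0, ENERGY_FACTOR)

print(f"Energy reduction: {energy_cut:.1f}%")
print(f"Inference time reduction: {time_cut:.1f}%")
print(f"Gen 2 energy for a 600-unit Gen 1 job: {gen2_energy:.1f} units")
```

Note that both factors are relative to Q.ANT’s first-generation system, so the percentages describe generation-over-generation gains rather than a comparison with CPUs or GPUs.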

The AI Energy Problem

The deployment timing reflects growing urgency around AI’s energy footprint. Bob Sorensen, Senior Vice President for Research at Hyperion Research, noted that energy has become a major limiting factor in scaling next-generation computing infrastructure. The LRZ deployment’s significance lies in demonstrating measurable energy reduction under real workloads rather than controlled laboratory conditions.

Q.ANT CEO Dr. Michael Förtsch articulated the core problem: “Adding more digital hardware no longer solves the compute scaling problem in AI. If we continue to scale with brute-force transistor logic, we simply turn electricity into heat.” This statement captures the fundamental tension between computational demand and energy efficiency that photonic computing aims to resolve.

The broader implications extend beyond individual data centers. If photonic co-processing can scale AI infrastructure without proportional energy increases, it could enable larger and more capable AI systems without the infrastructure constraints that currently limit deployment.

Industrial Applications

The LRZ installation focuses on compute-intensive applications where both performance and energy efficiency matter significantly:

Drug discovery — Simulating molecular interactions and protein folding requires massive computational resources that could benefit from efficiency gains.

Materials design — Computational modeling of new materials for batteries, semiconductors, and other applications involves complex nonlinear calculations.

Adaptive optimization — Industrial processes, logistics, and financial modeling that require rapid iterative optimization could leverage faster, more efficient computation.

These applications represent industrial challenges where even modest efficiency improvements translate to meaningful real-world impact.

Industry Context

Q.ANT operates its own TFLN chip pilot line in collaboration with IMS CHIPS in Stuttgart, giving the company integrated manufacturing capability for photonic integrated circuits. The collaboration with LRZ received funding support from the German Federal Ministry of Research, Technology and Space, reflecting government recognition of photonic computing’s strategic importance.

LRZ serves universities and research institutions across Bavaria and Germany as a member of Germany’s Gauss Centre for Supercomputing. Its selection as the deployment site reflects the facility’s rigorous operational standards and demanding computational workloads — precisely the conditions needed to validate photonic computing’s readiness for production environments.

Our Take

The Q.ANT deployment at LRZ represents the most credible step toward practical photonic computing we’ve seen. Previous announcements from various companies have showcased impressive laboratory results, but the transition to production HPC environments under real workloads is a different challenge entirely.

The benchmark numbers are striking: 6x lower energy consumption and 25x faster inference are not incremental improvements, although both are measured against Q.ANT’s own first-generation system. They suggest a potential paradigm shift in how AI infrastructure can scale. If these results hold across diverse workloads rather than just optimized benchmarks, the implications for data center design, AI accessibility, and computational sustainability are substantial.

The PCIe integration approach is strategically smart. Rather than proposing a complete replacement of existing infrastructure, Q.ANT positions photonics as a co-processing accelerator that works alongside conventional hardware. This makes adoption easier for organizations that have already invested heavily in CPU and GPU infrastructure.

The energy angle is perhaps the most compelling. As AI capabilities expand, the energy required to power increasingly capable models has become a genuine bottleneck. Google, Microsoft, and other major AI operators have discussed data center power constraints as limiting factors in their expansion plans. A technology that delivers more computation per watt without sacrificing accuracy addresses a real and pressing problem.

Whether Q.ANT’s photonic processors achieve the scaling needed to address global AI infrastructure demands remains to be seen. The LRZ deployment is an important validation, but the path from production HPC to widespread adoption requires demonstrating reliability, manufacturability, and cost-effectiveness at scale. This is nonetheless the most promising development in photonic computing for AI in recent memory.
