Google Gemma 4 Launch! Byte for Byte, the Most Capable Open Models

TLDR:

- Google launches Gemma 4 — four sizes (2B, 4B, 26B MoE, 31B Dense) built on Gemini 3 technology

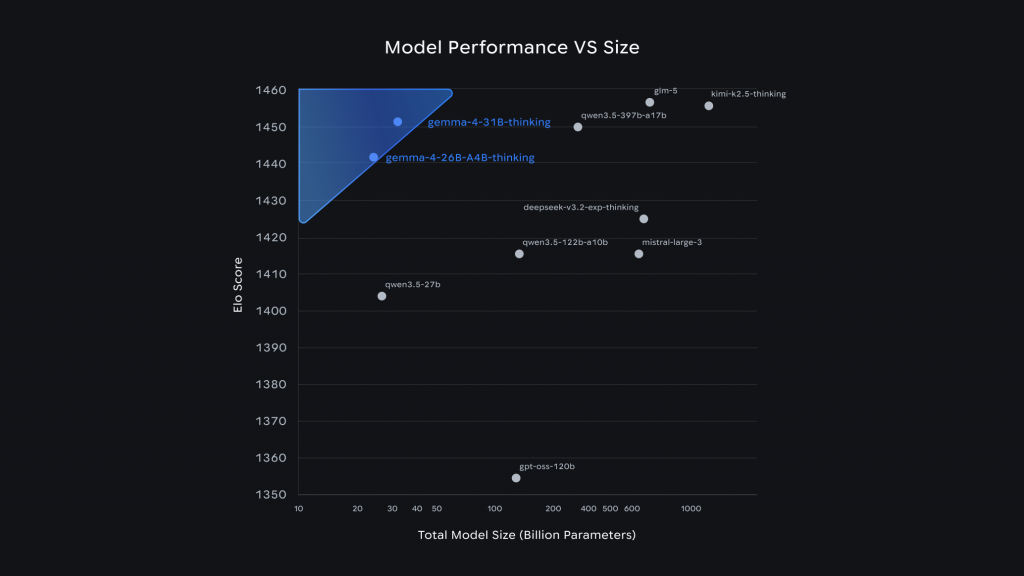

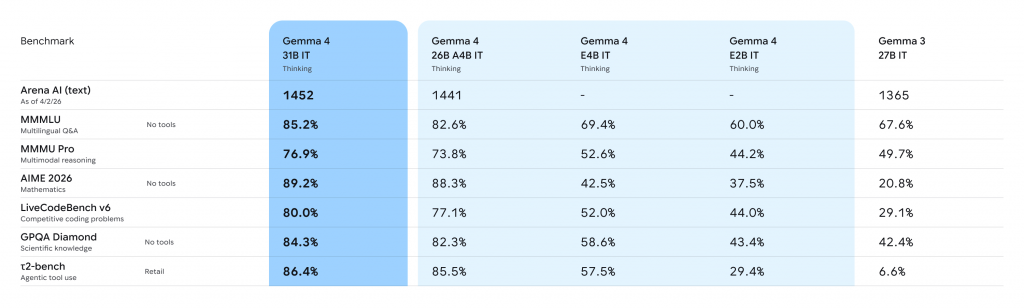

- 31B model ranks as the #3 open model in the world on the Arena AI text leaderboard

- 26B MoE outperforms models 20x its size through its Mixture of Experts architecture

- Released under the Apache 2.0 license: commercial use is permitted, subject only to the license's standard attribution and notice terms

- 256K context window for larger models, 140+ languages, native function calling and vision

## The Most Capable Open Models

Google just dropped Gemma 4, and the company is calling it “byte for byte, the most capable open models” available. Built from the same research and technology as Gemini 3, Gemma 4 represents Google’s strongest push yet into the open-source AI space.

Since the first generation launched, developers have downloaded Gemma over 400 million times, building what Google calls a “Gemmaverse” of more than 100,000 variants. Gemma 4 is the next evolution of that ecosystem.

## Four Sizes for Every Need

Gemma 4 comes in four versatile configurations:

- 31B Dense — The flagship. Currently ranking as the #3 open model in the world on Arena AI’s text leaderboard. Maximizes raw quality for researchers and developers who need frontier-level reasoning.

- 26B Mixture of Experts (MoE) — Ranks #6 globally. The MoE architecture activates only 3.8 billion of its total parameters during inference, delivering exceptionally fast tokens-per-second while maintaining high quality.

- Effective 2B (E2B) and Effective 4B (E4B) — Redefining on-device AI. Engineered for maximum compute and memory efficiency, these models run completely offline on phones, Raspberry Pi, and NVIDIA Jetson Orin Nano with near-zero latency.

## The Size vs Performance Story

Here’s what makes Gemma 4 remarkable: according to Google, the 26B model outperforms models 20x its size. That’s the MoE architecture doing the heavy lifting. A router activates only a few “expert” sub-networks for each token rather than running the entire model every time, which is how the model gets by with 3.8 billion active parameters out of 26 billion.
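The routing idea can be sketched in a few lines. This is a toy illustration of top-k expert routing as used in typical MoE layers, not Gemma 4's actual implementation: the expert count, the `top_k` value, and the scalar "experts" are all assumptions chosen for readability, since the announcement does not describe the router.

```python
import math
import random

def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def route_token(router_scores, top_k):
    """Pick the top_k experts for one token and renormalize their weights.

    Returns a list of (expert_index, weight) pairs whose weights sum to 1.
    """
    probs = softmax(router_scores)
    ranked = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)
    chosen = ranked[:top_k]
    mass = sum(probs[i] for i in chosen)
    return [(i, probs[i] / mass) for i in chosen]

def moe_layer(token, experts, router_scores, top_k=2):
    """Combine the outputs of only the selected experts for a single token."""
    output = 0.0
    for index, weight in route_token(router_scores, top_k):
        output += weight * experts[index](token)
    return output

# Toy setup (assumed values): 8 "experts", each a simple scalar function.
NUM_EXPERTS = 8
experts = [lambda x, scale=i + 1: scale * x for i in range(NUM_EXPERTS)]

random.seed(0)
scores = [random.uniform(-1.0, 1.0) for _ in range(NUM_EXPERTS)]
selected = route_token(scores, top_k=2)
print("experts used:", [i for i, _ in selected], "of", NUM_EXPERTS)
print("layer output:", moe_layer(3.0, experts, scores))
```

Only the selected experts are evaluated, so the compute per token scales with `top_k` rather than with the total number of experts.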

For developers, this level of intelligence-per-parameter means achieving frontier-level capabilities with significantly less hardware overhead. A single consumer GPU can now run a model that would have required datacenter resources a generation ago.
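To put the hardware claim in rough numbers, here is a back-of-the-envelope estimate of weight storage at a few precisions. It is a sketch under stated assumptions: the precisions listed are common choices rather than anything the announcement specifies, and the figures cover weights only, ignoring the KV cache, activations, and runtime overhead.

```python
# Bytes needed to store one parameter at each (assumed) precision.
BYTES_PER_PARAM = {"bf16": 2.0, "int8": 1.0, "int4": 0.5}

def weight_memory_gb(params_billions: float, precision: str) -> float:
    """Approximate weight storage in GB (1 GB = 1e9 bytes).

    Billions of parameters times bytes per parameter gives GB directly.
    """
    return params_billions * BYTES_PER_PARAM[precision]

# Model sizes are the total parameter counts quoted in the article.
for name, size_b in [("31B Dense", 31.0), ("26B MoE", 26.0)]:
    for precision in BYTES_PER_PARAM:
        gb = weight_memory_gb(size_b, precision)
        print(f"{name:10s} {precision:5s} ~{gb:.1f} GB")
```

Note that an MoE model typically still needs all of its experts loaded, so the 26B MoE's memory footprint follows its total size, while its per-token compute is closer to that of its 3.8 billion active parameters.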

## Technical Capabilities

- Advanced reasoning — Significant improvements in math and instruction-following benchmarks through multi-step planning and deep logic.

- Agentic workflows — Native function-calling, structured JSON output, and native system instructions enable autonomous agents that interact with tools and APIs reliably.

- Code generation — High-quality offline code generation, turning workstations into local-first AI coding assistants.

- Vision and audio — All models natively process video and images at variable resolutions, excelling at OCR and chart understanding. E2B and E4B add native audio input for speech recognition.

- Longer context — Edge models feature 128K context windows; larger models offer up to 256K. That’s enough to pass entire code repositories or long documents in a single prompt.

- 140+ languages — Natively trained for global audiences, enabling inclusive high-performance applications.
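As an illustration of the agentic workflow point, the sketch below shows how an application might consume a structured JSON tool call and dispatch it to local code. The `{"name": ..., "arguments": ...}` shape, the `get_weather` tool, and the simulated model output are all hypothetical; the announcement does not document Gemma 4's actual function-calling format.

```python
import json

def get_weather(city: str) -> dict:
    """Stand-in tool (hypothetical); a real one would call a weather service."""
    return {"city": city, "forecast": "sunny", "temp_c": 31}

# Registry mapping tool names the model may emit to Python callables.
TOOLS = {"get_weather": get_weather}

def dispatch(model_output: str) -> dict:
    """Parse a JSON tool call emitted by the model and run the matching tool.

    Always returns a dict with either a "result" or an "error" key, so the
    caller can feed the outcome back to the model without crashing.
    """
    try:
        call = json.loads(model_output)
    except json.JSONDecodeError as exc:
        return {"error": f"invalid JSON: {exc.msg}"}
    if not isinstance(call, dict):
        return {"error": "tool call must be a JSON object"}
    name = call.get("name")
    args = call.get("arguments", {})
    if name not in TOOLS:
        return {"error": f"unknown tool: {name}"}
    if not isinstance(args, dict):
        return {"error": "arguments must be a JSON object"}
    try:
        return {"result": TOOLS[name](**args)}
    except TypeError as exc:
        # Raised when the model supplies missing or unexpected arguments.
        return {"error": f"bad arguments: {exc}"}

# Simulated model output; a real application would read this from the model.
simulated_output = '{"name": "get_weather", "arguments": {"city": "Kuala Lumpur"}}'
print(dispatch(simulated_output))
```

Validating the call before executing it matters even with native function calling, because the application, not the model, is responsible for what actually runs.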

## The Open Source Commitment

Google listened to developer feedback. Gemma 4 is released under the Apache 2.0 license, a commercially permissive license. That gives developers complete control over their data, infrastructure, and models, and lets them build freely and deploy securely across any environment.

This matters for enterprises and sovereign organizations concerned about vendor lock-in. Gemma 4 provides a trusted, transparent foundation while meeting high security and reliability standards.

## Real-World Impact

Google highlights two examples of Gemma’s potential:

- BgGPT — INSAIT created a Bulgarian-first language model using Gemma, preserving language diversity in AI.

- Cell2Sentence-Scale — Yale University researchers used Gemma to discover new pathways for cancer therapy, demonstrating pharmaceutical applications beyond chat.

## Where to Get It

- Google AI Studio — 31B and 26B MoE available now

- Google AI Edge Gallery — E4B and E2B for mobile

- Hugging Face — Weights, Transformers, TRL, Transformers.js

- Ollama, LM Studio, NVIDIA NIM — Day-one support

- Android development — AICore Developer Preview for Agent Mode
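For readers who go the Ollama route, a minimal sketch of querying a locally served model over Ollama's HTTP API follows. The endpoint and request fields are Ollama's standard `/api/generate` interface; the model tag `gemma4` is an assumption, so check `ollama list` or the Ollama library for the real name.

```python
import json
import urllib.request

OLLAMA_URL = "http://localhost:11434/api/generate"  # Ollama's default local endpoint
MODEL_TAG = "gemma4"  # hypothetical tag; verify the actual name before use

def build_request(prompt: str, model: str = MODEL_TAG) -> urllib.request.Request:
    """Build a non-streaming generate request for a local Ollama server."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        OLLAMA_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )

def generate(prompt: str, model: str = MODEL_TAG, timeout: float = 120.0) -> str:
    """Send the prompt and return the generated text from the JSON reply."""
    request = build_request(prompt, model)
    with urllib.request.urlopen(request, timeout=timeout) as resp:
        body = json.loads(resp.read().decode("utf-8"))
    return body["response"]

# Usage (requires a running Ollama server with the model pulled):
# print(generate("Summarize the Apache 2.0 license in one sentence."))
```

Because everything goes to `localhost`, prompts and outputs stay on the machine, which is the data-control benefit the article attributes to running open weights locally.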

The Gemma 4 Good Challenge on Kaggle invites developers to build products that create meaningful positive change.

## Our Take

Gemma 4 is Google’s most serious commitment to open-source AI yet. The numbers are compelling: the 31B model ranks #3 among open models on Arena AI, the 26B MoE beats models 20x its size, and the intelligence-per-parameter story brings frontier-level AI within reach of consumer hardware.

The Apache 2.0 license removes a major barrier to enterprise adoption: no field-of-use restrictions, no commercial limitations, and full data sovereignty when the models are self-hosted. For Malaysian businesses considering AI integration, an open model backed by Google’s research credibility is worth evaluating against proprietary alternatives.

The mobile story is particularly interesting. E2B and E4B running offline on Pixel, Qualcomm, and MediaTek hardware with near-zero latency — that’s the on-device AI future arriving now. Prototype on Android, deploy at scale on Google Cloud when ready.

Whether Gemma 4 actually delivers on its benchmark promises in real-world use will depend on fine-tuning and use case. But as a foundation model available to everyone, it’s a significant step forward for the open AI ecosystem.

Sources:

- Google AI Studio: Gemma 4

- Gemma 4 on Hugging Face

- Gemma 4 Good Challenge on Kaggle