Anthropic vs Pentagon: The Claude Ban That Backfired

TLDR:

- Pentagon demanded unrestricted access to Claude, including removal of safety guardrails

- Anthropic refused, citing core principles against domestic surveillance and autonomous weapons

- February 2026: Trump ordered the US government to stop using Anthropic models entirely

- Despite the ban, Claude signups have surged to record levels

- Malaysia: Claude remains fully accessible with no restrictions

What Happened

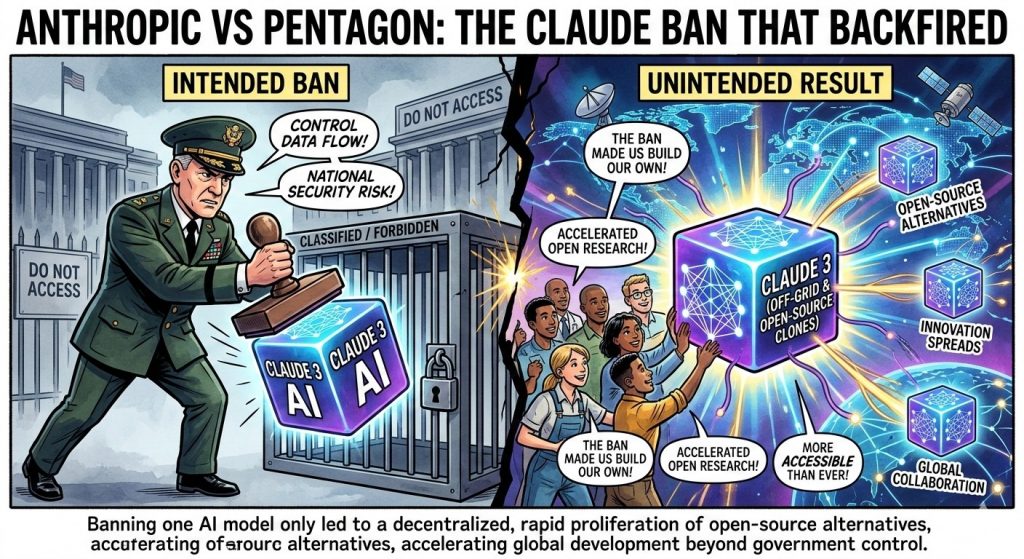

The United States government just banned one of the world’s most popular AI assistants from federal use — and somehow, everyone wants it more than ever.

In February 2026, the Trump administration ordered all US government agencies to cease using Anthropic’s Claude models entirely. This dramatic decision came after months of tense negotiations between the Pentagon and the AI startup. At the heart of the dispute: the US Department of Defense (DoD) wanted unrestricted access to Claude’s capabilities, including the ability to remove Anthropic’s built-in safety guardrails.

The DoD’s request was extensive. According to reports, military officials sought what amounted to a “jailbroken” version of Claude — one that could assist with domestic surveillance operations, support autonomous weapons development, and operate without the ethical constraints Anthropic had built into its flagship model. These guardrails prevent Claude from helping with tasks like spying on citizens, facilitating violence, or providing information that could harm people.

Anthropic’s response was swift and unwavering. The company refused to compromise on its safety principles, even when faced with pressure from the most powerful government in the world. This stand eventually led to the executive order effectively banning Claude from federal use.

The ban sent shockwaves through the tech industry. Here was a $380 billion company (Anthropic’s valuation as of February 2026) telling the US military to take a hike. It’s the kind of story that makes headlines — and drives signups.

Why Anthropic Refused

Anthropic wasn’t being stubborn. The company’s refusal to grant unrestricted access to the Pentagon stems from core ethical commitments that define what Claude is supposed to be.

Anthropic’s safety guidelines explicitly prohibit Claude from assisting with domestic surveillance. This means no helping governments spy on their own citizens, no analyzing data for mass monitoring programs, no building tools for political repression. The DoD wanted this restriction removed — and Anthropic said no.

Perhaps more significantly, the Pentagon also requested help with autonomous weapons development. Claude’s guidelines prohibit assisting in the creation of systems designed to kill or harm humans without human oversight. This restriction follows from Anthropic’s broader commitment to AI safety and alignment, which is fundamental to how the company builds AI. Removing it would essentially create a different product, one that Anthropic’s leadership believed would be dangerous and morally indefensible.

The company’s founding team, which includes former OpenAI researchers who left over safety concerns, has always prioritized building AI that helps humanity rather than harms it. This isn’t just marketing; it is reflected in how Claude is trained and in the usage rules Anthropic enforces.

By refusing to budge, Anthropic took a significant financial risk. The US government represents enormous potential revenue. But the company calculated that compromising its core principles would be worse for its long-term future than losing government contracts.

The result: a ban that has, paradoxically, made Claude more popular than ever.

The Usage Surge

Nothing fuels interest like being told you can’t have something.

Since the ban was announced in February 2026, Anthropic has seen record signups. The “Streisand effect” is in full force: the very act of banning Claude has made it more desirable to businesses, developers, and individuals worldwide.

The numbers tell the story. Anthropic’s valuation has climbed to $380 billion, making it one of the most valuable private companies in the world. This growth came despite losing access to the US federal market, which speaks to how much demand exists in the private sector and internationally.

Claude Gov, the company’s government-specific offering launched in June 2025, was designed to provide secure, compliant AI for federal agencies. But even that version maintained Anthropic’s ethical guardrails. The DoD apparently wanted something else entirely — and when they couldn’t get it, they banned the whole product.

What happened next was predictable in retrospect. Businesses and individuals who had been on the fence about adopting Claude suddenly had a compelling reason to sign up. The ban became free advertising, demonstrating that Claude’s safety commitments were real enough to cost the company government contracts.

For Malaysian users, this is particularly relevant. While the US government has banned Claude from its systems, there’s no such restriction here. The ban is a US-only policy — it doesn’t apply to businesses, individuals, or organizations in Malaysia.

What Malaysian Users Need to Know

If you’re in Malaysia, the Pentagon controversy shouldn’t concern you — at least not directly.

Here’s what matters: Claude remains fully accessible in Malaysia. There are no restrictions on using Anthropic’s AI assistant for personal, business, or professional purposes. You can sign up for a Claude account right now and use it just like anyone else in the world.

The US government ban applies only to US federal agencies and contractors. It doesn’t affect Malaysian companies using Claude. It doesn’t restrict Malaysian individuals from accessing the service. It doesn’t mean Claude is “banned” in the way you might think — it’s only banned from US government systems.

For Malaysian businesses, this creates an interesting opportunity. While the US is restricting its own agencies from using one of the world’s most capable AI assistants, there’s nothing stopping Malaysian organizations from leveraging Claude’s full capabilities. The same safety features that made Anthropic refuse the Pentagon are still in place — they’re features, not bugs.

Whether you’re a developer building AI-powered applications, a business leader exploring automation, or an individual curious about AI, Claude is available and ready to use. The controversy around its US government ban is really a story about American politics — not about the technology itself.

If you’ve been considering trying Claude, there’s no better time. The service is fully operational in Malaysia, and the record-breaking growth Anthropic is experiencing means continuous improvements to the platform.