# Google TurboQuant: How Extreme Compression Makes AI Models 8x Faster

TLDR:

– Google introduces TurboQuant, a compression algorithm that reduces key-value cache memory by at least 6x

– Achieves up to an 8x speedup on H100 GPUs with zero accuracy loss

– Quantizes the key-value cache to 3 bits without requiring training or fine-tuning

– Could lower costs for running large AI models

– Applied to vector search and KV cache optimization

The Memory Problem in AI

Every time you use an AI model, there’s a hidden bottleneck: the key-value (KV) cache. This “digital cheat sheet” stores the attention keys and values already computed for earlier tokens, so the model can reuse them instead of recomputing them for every new token. The problem is that the cache grows with the length of the context and consumes vast amounts of memory, creating bottlenecks that slow down even powerful hardware.
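
To see why the cache gets so large, it helps to do the arithmetic. The sketch below estimates KV cache size for a standard transformer, which stores one key vector and one value vector per token, per KV head, per layer. The function name and the model shape (32 layers, 8 KV heads, 128-dimensional heads, a 128K-token context) are hypothetical and chosen only for illustration; they are not taken from Gemma, Mistral, or the TurboQuant paper.

```python
def kv_cache_bytes(num_layers, num_kv_heads, head_dim, seq_len, batch_size, bits_per_value):
    """Approximate KV cache size in bytes (ignores padding and allocator metadata)."""
    # Factor of 2: each token stores one key vector and one value vector
    # per KV head, per layer.
    num_values = 2 * num_layers * num_kv_heads * head_dim * seq_len * batch_size
    return num_values * bits_per_value // 8


# Hypothetical model shape, not a specific Gemma or Mistral configuration.
size = kv_cache_bytes(num_layers=32, num_kv_heads=8, head_dim=128,
                      seq_len=131072, batch_size=1, bits_per_value=16)
print(size / 2**30, "GiB")  # 16.0 GiB for a single 128K-token sequence
```

Because the size scales linearly with `bits_per_value`, every bit removed from each stored number shrinks the whole cache proportionally.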

Google Research has just introduced TurboQuant, a compression algorithm that addresses this problem head-on. The results are striking: up to 8x faster performance on H100 GPUs, with zero accuracy loss and no need for model fine-tuning.

How TurboQuant Works

TurboQuant tackles memory overhead in two steps. First, it uses a method called PolarQuant, which randomly rotates the data vectors to simplify their geometry and make them easy to compress. This first stage spends most of the bit budget on capturing the main concept of each data point.
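
The idea behind the rotation step can be sketched in a few lines. This is a minimal illustration under stated assumptions, not PolarQuant itself: it swaps in a randomized Hadamard transform (random sign flips followed by an orthonormal Walsh-Hadamard transform) as a cheap random rotation, and uses a plain uniform 3-bit quantizer with a fixed clip range of 3/sqrt(d). All helper names are hypothetical. What it shows is that a vector with all of its energy in one coordinate is badly clipped when quantized directly, while after rotation that energy is spread evenly across coordinates and the same quantizer handles it well.

```python
import math
import random


def hadamard(x):
    """Orthonormal fast Walsh-Hadamard transform; len(x) must be a power of two."""
    y = list(x)
    d = len(y)
    if d & (d - 1):
        raise ValueError("length must be a power of two")
    h = 1
    while h < d:
        for i in range(0, d, 2 * h):
            for j in range(i, i + h):
                y[j], y[j + h] = y[j] + y[j + h], y[j] - y[j + h]
        h *= 2
    scale = 1.0 / math.sqrt(d)
    return [v * scale for v in y]


def rotate(x, signs):
    # Randomized Hadamard rotation (stand-in for a dense random rotation).
    return hadamard([s * v for s, v in zip(signs, x)])


def unrotate(y, signs):
    # The normalized Hadamard matrix is symmetric and orthogonal, so it is its own inverse.
    return [s * v for s, v in zip(signs, hadamard(y))]


def quantize(y, bits, clip):
    """Uniform scalar quantizer on [-clip, clip]. The clip range is fixed in advance,
    so no per-block scale has to be stored next to the codes."""
    levels = 2 ** bits
    step = 2 * clip / levels
    return [min(int((max(-clip, min(clip, v)) + clip) / step), levels - 1) for v in y]


def dequantize(codes, bits, clip):
    step = 2 * clip / 2 ** bits
    return [-clip + (c + 0.5) * step for c in codes]  # bin midpoints


def reconstruction_error(x, bits, signs=None):
    """Euclidean error after quantizing x (assumed unit-norm), optionally rotating first."""
    clip = 3.0 / math.sqrt(len(x))  # assumption: ~3 std devs of a rotated unit vector's coordinates
    y = rotate(x, signs) if signs is not None else list(x)
    x_hat = dequantize(quantize(y, bits, clip), bits, clip)
    if signs is not None:
        x_hat = unrotate(x_hat, signs)
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(x, x_hat)))


d = 64
rng = random.Random(0)
signs = [rng.choice((-1, 1)) for _ in range(d)]
spike = [1.0] + [0.0] * (d - 1)  # all energy in a single coordinate

print(reconstruction_error(spike, 3))         # ≈ 0.77: the spike gets clipped
print(reconstruction_error(spike, 3, signs))  # ≈ 0.125: rotation spreads the energy out
```

Because the rotation is orthogonal, it preserves lengths, and inner products between two vectors rotated by the same matrix are unchanged. Because the coordinate range after rotation is predictable, the clip range can be fixed up front rather than stored per block.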

Second, it spends a tiny remaining budget (just 1 bit) on the QJL algorithm to correct the error left over from the first stage. This acts as a mathematical error-checker that removes bias, resulting in more accurate attention scores.
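
The 1-bit correction can be illustrated with the quantized Johnson-Lindenstrauss idea: project the residual onto random Gaussian directions, keep only the sign of each projection plus the residual's norm, and rescale so the inner-product estimate is unbiased. This is a sketch assuming that structure. The coarse rounding used as the first stage, the toy vectors, the projection count, and the function names are all hypothetical, and TurboQuant's actual residual handling may differ in detail.

```python
import math
import random


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def gaussian_matrix(m, d, rng):
    """m x d matrix of i.i.d. standard normal entries, shared by keys and queries."""
    return [[rng.gauss(0.0, 1.0) for _ in range(d)] for _ in range(m)]


def qjl_encode(r, S):
    """Keep one sign bit per random projection of the residual, plus its norm."""
    signs = [1 if dot(row, r) >= 0 else -1 for row in S]
    return signs, math.sqrt(dot(r, r))


def qjl_inner_product(q, code, S):
    """Unbiased estimate of <q, r> from the 1-bit code of r.

    For a standard Gaussian row s: E[(s.q) * sign(s.r)] = sqrt(2/pi) * <q, r> / ||r||,
    so rescaling the average by sqrt(pi/2) * ||r|| removes the bias.
    The query q itself is projected in full precision.
    """
    signs, norm = code
    total = sum(dot(row, q) * b for row, b in zip(S, signs))
    return math.sqrt(math.pi / 2) * norm * total / len(S)


# Hypothetical key and query; rounding to a 0.5 grid stands in for the first stage.
k = [0.3, -0.7, 0.2, 0.5]
q = [1.0, 0.5, -0.5, 2.0]
k_hat = [round(v * 2) / 2 for v in k]          # coarse first-stage approximation
residual = [a - b for a, b in zip(k, k_hat)]   # error left over by the first stage

rng = random.Random(0)
S = gaussian_matrix(20000, len(k), rng)
naive = dot(q, k_hat)  # ignores the residual, so it is systematically off
corrected = naive + qjl_inner_product(q, qjl_encode(residual, S), S)
print(dot(q, k), naive, corrected)
```

The naive score built only from the coarse approximation is off by a fixed amount, while adding the sign-bit estimate of the residual term brings it back close to the true inner product. The large projection count is only there to keep the random estimate tight in this toy example.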

The key innovation concerns overhead. Traditional vector quantization methods add 1-2 extra bits per number because they must store quantization constants alongside every small block of data. TurboQuant eliminates this overhead entirely. The result is genuinely smaller data without hidden costs.
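
The overhead arithmetic is easy to check. Assuming a hypothetical block-wise scheme that stores a 16-bit scale and a 16-bit zero point for each block, the amortized cost per number is the code width plus the block's constants spread over its members:

```python
def effective_bits_per_value(code_bits, block_size, constant_bits_per_block):
    """Storage cost per number once per-block quantization constants are amortized."""
    return code_bits + constant_bits_per_block / block_size


# Hypothetical block-wise scheme: 16-bit scale + 16-bit zero point per block.
CONSTANT_BITS = 16 + 16

print(effective_bits_per_value(3, 32, CONSTANT_BITS))  # 4.0 -> 1 extra bit per number
print(effective_bits_per_value(3, 16, CONSTANT_BITS))  # 5.0 -> 2 extra bits per number
print(effective_bits_per_value(3, 32, 0))              # 3.0 -> no per-block constants
```

Smaller blocks track the data more closely but pay more overhead per number, which is why removing the constants altogether matters at very low bit widths.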

Real-World Performance

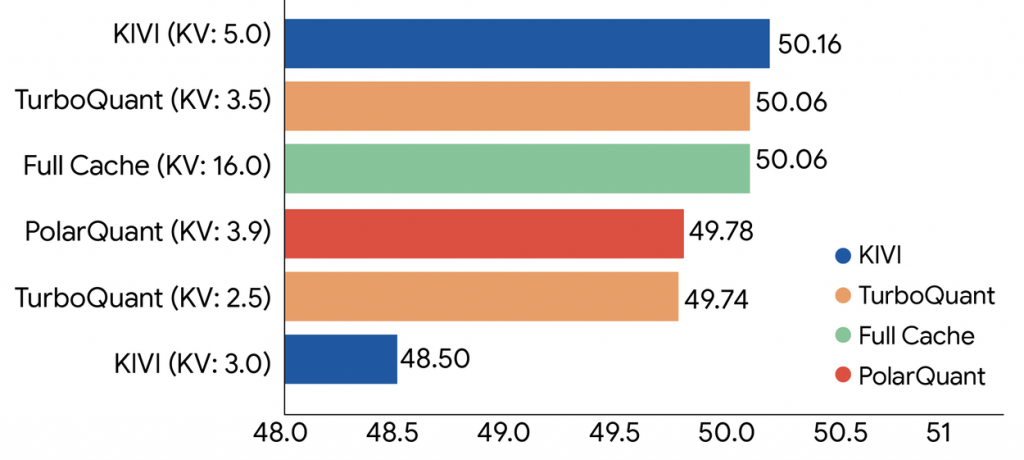

In tests with open-source language models including Gemma and Mistral, TurboQuant produced several impressive results:

The algorithm quantizes the key-value cache to just 3 bits without requiring training or fine-tuning, and without any compromise in model accuracy. On standard long-context benchmarks including Needle In A Haystack tests (which check if a model can find specific information buried in massive amounts of text), TurboQuant achieved perfect downstream results while reducing memory footprint by at least 6x.
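
A 3-bit code only saves memory if the codes are packed densely rather than stored one per byte. The helpers below are a hypothetical storage sketch, not TurboQuant's actual memory layout: they pack eight 3-bit codes into three bytes and unpack them again.

```python
def pack_3bit(codes):
    """Pack 3-bit codes (ints 0..7) densely into bytes, least significant bits first."""
    out = bytearray()
    buf = nbits = 0
    for c in codes:
        if not 0 <= c <= 7:
            raise ValueError(f"code {c} does not fit in 3 bits")
        buf |= c << nbits
        nbits += 3
        while nbits >= 8:          # emit every complete byte
            out.append(buf & 0xFF)
            buf >>= 8
            nbits -= 8
    if nbits:
        out.append(buf & 0xFF)     # flush the final partial byte
    return bytes(out)


def unpack_3bit(data, count):
    """Inverse of pack_3bit: recover the first `count` codes."""
    codes = []
    buf = nbits = 0
    stream = iter(data)
    while len(codes) < count:
        if nbits < 3:              # refill the bit buffer one byte at a time
            buf |= next(stream) << nbits
            nbits += 8
        codes.append(buf & 0b111)
        buf >>= 3
        nbits -= 3
    return codes


codes = [5, 0, 7, 3, 1, 6, 2, 4]
packed = pack_3bit(codes)
print(len(codes), "codes ->", len(packed), "bytes")  # 8 codes -> 3 bytes
assert unpack_3bit(packed, len(codes)) == codes
```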

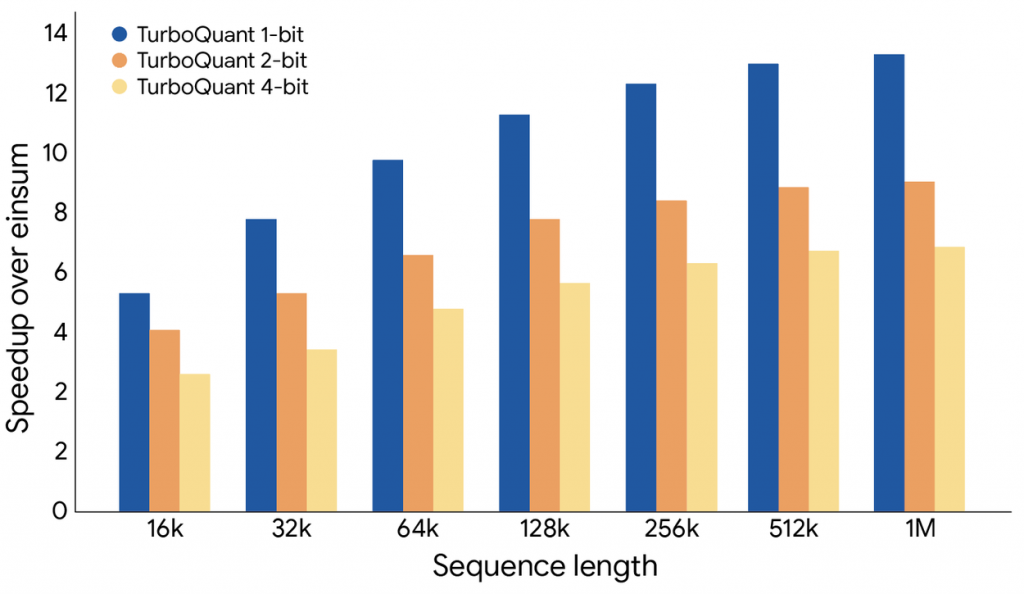

The practical speedup is even more impressive. On NVIDIA H100 GPU accelerators, 4-bit TurboQuant delivers up to an 8x performance increase over unquantized 32-bit keys, which suggests the same hardware could handle significantly more requests per second.

Applications Beyond Language Models

TurboQuant’s impact extends beyond just language models. For vector search, which powers semantic search engines that understand meaning rather than just keywords, the algorithm dramatically speeds up the index building process. Google tested TurboQuant against state-of-the-art methods and found it consistently achieves superior recall ratios while using simpler, more efficient codebooks.
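
Recall measures how many of the true nearest neighbours an approximate search still finds. The sketch below computes recall@k for inner-product search over a compressed copy of a random database. The plain rounding quantizer is a hypothetical stand-in rather than TurboQuant, the data are random, the helper names are made up, and the recall metric Google reports may be defined differently.

```python
import random


def top_k(query, database, k):
    """Indices of the k database vectors with the largest inner product with query."""
    scored = sorted(
        ((sum(a * b for a, b in zip(query, v)), i) for i, v in enumerate(database)),
        reverse=True,
    )
    return [i for _, i in scored[:k]]


def recall_at_k(true_ids, found_ids):
    """Fraction of the exact top-k neighbours that the approximate search also returned."""
    return len(set(true_ids) & set(found_ids)) / len(true_ids)


def round_to_grid(vec, step):
    # Hypothetical stand-in quantizer (plain rounding), not TurboQuant.
    return [round(v / step) * step for v in vec]


rng = random.Random(0)
dim, n, k = 16, 1000, 10
database = [[rng.gauss(0.0, 1.0) for _ in range(dim)] for _ in range(n)]
queries = [[rng.gauss(0.0, 1.0) for _ in range(dim)] for _ in range(20)]
compressed = [round_to_grid(v, 0.5) for v in database]

# Exact search on the originals defines the ground truth; search runs on the compressed copy.
recalls = [recall_at_k(top_k(q, database, k), top_k(q, compressed, k)) for q in queries]
mean_recall = sum(recalls) / len(recalls)
print(f"mean recall@{k}: {mean_recall:.2f}")
```

Swapping `round_to_grid` for a different quantizer and comparing the mean recall at the same memory budget is the shape of comparison the paragraph above describes.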

This matters for anyone building AI applications. Cheaper deployment means more developers can afford to run large models. Faster inference means better real-time experiences. Lower memory requirements mean AI can run on more devices, including edge hardware.

Looking Forward

TurboQuant represents a fundamental algorithmic contribution backed by strong theoretical proofs, not just an engineering optimization. The research team, which collaborated with academics from KAIST, NYU, and other institutions, shows that the method operates near theoretical lower bounds for efficiency.

The implications reach into every area where AI meets users: from chatbots and search engines to recommendation systems and content discovery. As AI becomes more integrated into everyday products, these underlying efficiency improvements compound into tangible benefits for end users.

For developers and businesses deploying AI, TurboQuant offers a path to better performance without investing in additional hardware. For users, it promises faster responses and more reliable AI experiences across the board.

Source: Google Research

[…] Run TurboQuant – “Lossless” Quantization for Local AI TESTED by xCreate – Google TurboQuant: How Extreme Compression Makes AI Models 8x Faster (Helloexpress) – TurboQuant GitHub / […]