MAGI: Three Cheap AI Models Beat Claude Through Debate, Not Voting

TLDR:

– MAGI is an open-source tool where multiple AI models debate each other instead of voting

– Three models debating achieved 88% accuracy vs Claude Sonnet 4.6’s 76%

– Uses the ICE protocol – models critique each other’s reasoning, then revise

– Uses MiniMax M2.7 alongside DeepSeek V3.2 and Xiaomi MiMo-v2-pro

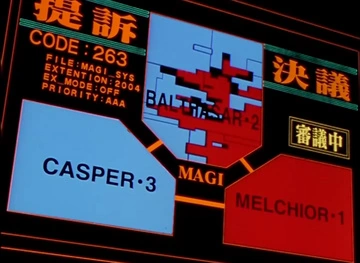

– Styled after NERV’s MAGI system from Evangelion, with a real-time hex dashboard

When Democracy Fails AI

A developer has created an open-source tool that challenges the assumption that ensemble AI means voting. The project, called MAGI, demonstrates that three cheap AI models can outperform a single premium model, but only if they argue with each other rather than simply vote.

The results are striking. In the project’s benchmark tests, Claude Sonnet 4.6 alone scored 76%. Three models voting together scored just 72%, worse than the single model. But three models debating each other scored 88%, beating Claude by 12 percentage points.

Why Voting Fails

The developer, who goes by the handle fshiori, discovered something counterintuitive when testing multi-model systems. When models vote on answers, their errors tend to be correlated: because they were trained on similar data, they share the same logical blind spots. Three models voting in unison for the same wrong answer only make the error look more confident.
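A quick back-of-the-envelope calculation shows why correlation matters. Assume, purely for illustration, three models that are each right 76% of the time. If their errors were independent, a majority vote would be right whenever at least two of the three are right; if their errors are perfectly correlated, the vote can never do better than one model. The sketch below computes both extremes (the function names are our own, not part of MAGI).

```python
from math import comb


def majority_accuracy_independent(p: float, n: int = 3) -> float:
    """Chance that a strict majority of n voters is correct when each voter
    is independently correct with probability p (a binomial tail sum)."""
    need = n // 2 + 1  # smallest strict majority, e.g. 2 of 3
    return sum(comb(n, k) * p**k * (1 - p) ** (n - k) for k in range(need, n + 1))


def majority_accuracy_correlated(p: float) -> float:
    """Perfectly correlated voters are right or wrong together,
    so the majority is exactly as accurate as a single voter."""
    return p


p = 0.76  # illustrative per-model accuracy, not a measured MAGI figure
print(f"independent errors: {majority_accuracy_independent(p):.3f}")  # 0.855
print(f"correlated errors:  {majority_accuracy_correlated(p):.3f}")   # 0.760
```

Independent errors would let a vote beat any of its members; the article’s point is that real models’ errors overlap, which pushes the vote toward the correlated case, where it adds nothing.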

This is the fundamental problem MAGI attempts to solve. Instead of having each model answer independently and then vote, MAGI makes models read each other’s answers, identify logical flaws, and defend or revise their positions. Through three rounds of structured debate, the collective reasoning covers significantly more ground than any single model’s.

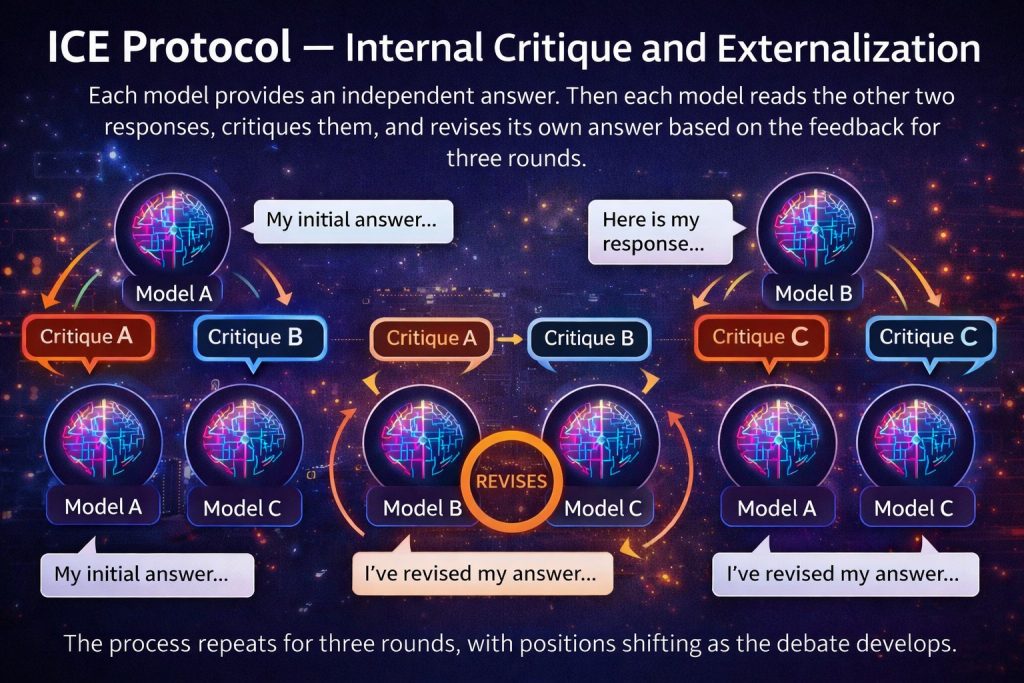

The ICE Protocol

MAGI implements something called the ICE protocol — Internal Critique and Externalization. The process works like this:

First, each model provides an independent answer. Then each model reads the other two answers and identifies specific weaknesses in their reasoning. Each model then revises its own answer based on the critiques received. This repeats for three rounds, with positions shifting as the debate develops.
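The three steps map onto a simple loop. The sketch below is a hypothetical illustration, not MAGI’s code: it assumes each model is a plain function from prompt to reply (the `Model` alias, `debate` function, and prompt wording are our own), and it leaves out the structured parsing a real tool would need.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List

# Assumption: a "model" is any function that maps a prompt string to a reply.
Model = Callable[[str], str]


@dataclass
class DebateLog:
    # rounds[0] holds the independent answers; rounds[i] the answers after round i
    rounds: List[Dict[str, str]] = field(default_factory=list)


def debate(question: str, models: Dict[str, Model], rounds: int = 3) -> DebateLog:
    log = DebateLog()

    # Step 1: every model answers on its own, without seeing the others.
    answers = {name: ask(f"Question: {question}\nAnswer independently.")
               for name, ask in models.items()}
    log.rounds.append(dict(answers))

    for _ in range(rounds):
        # Step 2: each model critiques every *other* model's current answer.
        received: Dict[str, List[str]] = {name: [] for name in models}
        for critic, ask in models.items():
            for target, target_answer in answers.items():
                if target != critic:
                    received[target].append(ask(
                        f"Question: {question}\n"
                        f"Point out specific flaws in this answer:\n{target_answer}"))

        # Step 3: each model defends or revises its answer given the critiques
        # it received. The comprehension still reads the previous round's answers.
        answers = {
            name: ask(
                f"Question: {question}\nYour answer:\n{answers[name]}\n"
                "Critiques:\n" + "\n".join(received[name])
                + "\nDefend or revise your answer.")
            for name, ask in models.items()
        }
        log.rounds.append(dict(answers))

    return log
```

Because this naive version makes one call per critique, three models and three rounds cost 3 + 3 × (6 + 3) = 30 calls; batching both critiques into a single prompt per model would cut that.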

The key insight is that a model’s weaknesses often stem from blind spots in its training data. Having another model deliberately target that blind spot is more effective than hoping a majority vote will cancel the error out.

What MAGI Uses

MAGI was built using three budget-friendly models: DeepSeek V3.2, Xiaomi MiMo-v2-pro, and MiniMax M2.7. Notably, MiniMax M2.7 is among the models powering the system that generated this article. The choice of models matters less than the diversity of their reasoning approaches — the goal is maximizing different logical perspectives, not raw intelligence.

The benchmark testing showed that even with cheaper models, the debate approach significantly outperformed a single premium model. At scale, the cost efficiency becomes dramatic: the three-model debate costs roughly the same as a single Claude Sonnet query, but achieves higher accuracy.

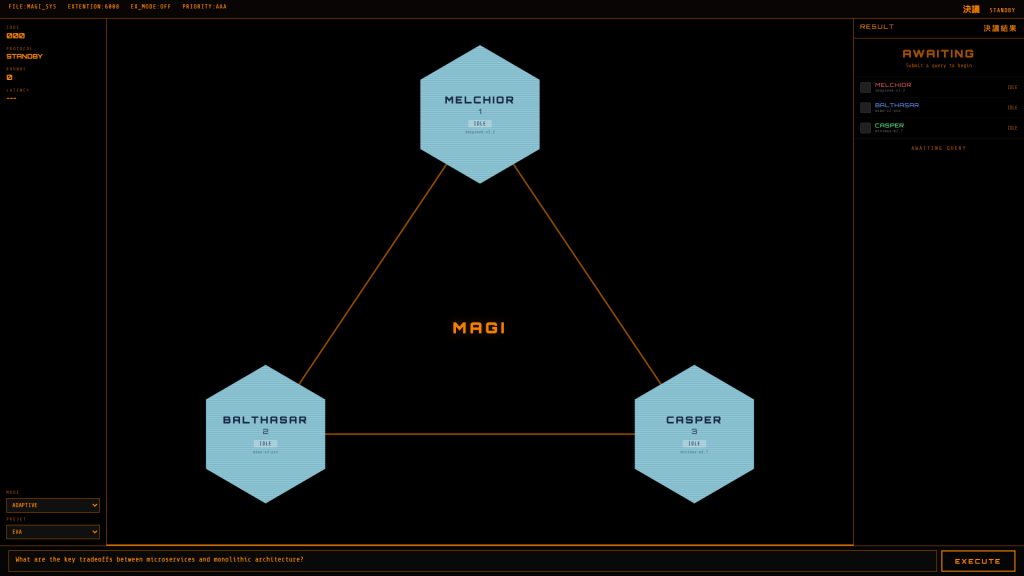

The NERV Dashboard

True to its Evangelion inspiration, MAGI includes a real-time dashboard styled after NERV’s command interface. The display features hexagonal panels, orange connection lines, and status indicators showing each model’s state: accepting, rejecting, or holding firm. Users can watch the three AI personalities debate in real time, seeing which models change positions and why.

For Evangelion fans, the aesthetic is intentional. The original MAGI system in the series consisted of three supercomputers (Melchior, Balthasar, and Casper), each with a distinct personality, that had to reach consensus on major decisions. MAGI the tool replicates that concept: three models, three viewpoints, one decision.

Beyond Simple Voting

What separates MAGI from other ensemble AI projects is its depth of analysis. Most multi-model tools simply aggregate votes; MAGI tracks which model changed its mind in which round, and why. The system also decides automatically whether a quick vote is enough or an extended debate is needed, based on how much the models disagree.
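One plausible way to implement that switch is to measure agreement among the models’ answers and escalate to debate only when it falls below a threshold. The function names, the normalized exact-match comparison, and the default threshold below are assumptions for illustration; the article does not say how MAGI measures disagreement.

```python
from collections import Counter
from typing import List


def agreement(answers: List[str]) -> float:
    """Share of models backing the most common answer (1.0 means unanimous).
    Simplification: answers are compared as trimmed, lower-cased strings."""
    counts = Counter(a.strip().lower() for a in answers)
    return counts.most_common(1)[0][1] / len(answers)


def choose_mode(answers: List[str], threshold: float = 1.0) -> str:
    """Hypothetical policy: settle by a quick vote only when agreement reaches
    the threshold; otherwise escalate to a full debate."""
    return "vote" if agreement(answers) >= threshold else "debate"


print(choose_mode(["Paris", "paris ", "Paris"]))  # vote
print(choose_mode(["Paris", "Lyon", "Paris"]))    # debate
```

Real answers rarely match word for word, so a practical version would compare extracted final answers or use a model to judge equivalence; the routing logic stays the same.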

The output isn’t just an answer — it’s a full decision trail including confidence levels, minority opinions, and the complete reasoning evolution. There’s even a “magi diff” feature for code review, where three models analyze code from safety, performance, and quality perspectives simultaneously.
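A decision trail of this kind is essentially a log of each model’s position per round, from which the final answer, the minority view, and every change of mind can be derived. The classes below are a hypothetical sketch of such a structure, not MAGI’s actual output format; in particular, storing a numeric confidence and taking the most common final-round answer as the decision are our assumptions.

```python
from collections import Counter
from dataclasses import dataclass, field
from typing import Dict, List, Tuple


@dataclass
class Position:
    model: str
    round_no: int          # 0 = independent answer, 1..n = debate rounds
    answer: str
    confidence: float      # assumed self-reported score in [0, 1]
    reason: str = ""       # why the model holds or changed this position


@dataclass
class DecisionTrail:
    positions: List[Position] = field(default_factory=list)

    def record(self, pos: Position) -> None:
        self.positions.append(pos)

    def final_answers(self) -> Dict[str, str]:
        last = max(p.round_no for p in self.positions)
        return {p.model: p.answer for p in self.positions if p.round_no == last}

    def decision(self) -> str:
        # Assumption: the final call is the most common last-round answer.
        return Counter(self.final_answers().values()).most_common(1)[0][0]

    def minority(self) -> Dict[str, str]:
        winner = self.decision()
        return {m: a for m, a in self.final_answers().items() if a != winner}

    def changes(self) -> List[Tuple[str, int, str, str]]:
        """(model, round, old answer, new answer) for every change of mind."""
        history: Dict[str, List[Position]] = {}
        for p in sorted(self.positions, key=lambda p: p.round_no):
            history.setdefault(p.model, []).append(p)
        return [(model, cur.round_no, prev.answer, cur.answer)
                for model, ps in history.items()
                for prev, cur in zip(ps, ps[1:])
                if cur.answer != prev.answer]
```

Keeping raw positions rather than only the final answer is what makes the minority opinions and the per-round reasoning evolution recoverable afterwards.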

The system is also fault-tolerant. If one or two models fail, it automatically degrades gracefully rather than stopping entirely.
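Graceful degradation can be as simple as catching per-model failures and choosing a smaller protocol for whichever models are left. This is a minimal sketch assuming each model is a plain prompt-to-reply function; the names `ask_all` and `mode_for` and the three-step ladder are illustrative assumptions, not MAGI’s documented behavior.

```python
import logging
from typing import Callable, Dict

Model = Callable[[str], str]  # assumption: a model maps a prompt to a reply
log = logging.getLogger("magi_sketch")


def ask_all(prompt: str, models: Dict[str, Model]) -> Dict[str, str]:
    """Query every model, skipping any that fail instead of aborting the run."""
    replies: Dict[str, str] = {}
    for name, ask in models.items():
        try:
            replies[name] = ask(prompt)
        except Exception as exc:  # e.g. a timeout or provider error
            log.warning("%s failed (%r); continuing without it", name, exc)
    if not replies:
        raise RuntimeError("all models failed; nothing to degrade to")
    return replies


def mode_for(survivors: int) -> str:
    """Hypothetical degradation ladder based on how many models answered."""
    if survivors >= 3:
        return "three-way debate"
    if survivors == 2:
        return "two-way debate"
    return "single-model answer"
```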

Our Take

MAGI represents a meaningful step in how we think about AI ensembles. The traditional approach — multiple models voting — has a fundamental ceiling because correlated errors don’t cancel out. Debate-based approaches potentially break through that ceiling by making errors visible and attackable.

For developers and businesses, the implications are practical. Running three budget models with debate could be more effective than paying premium prices for a single model, especially at scale. The open-source nature of MAGI means anyone can test this approach on their own workflows.

For everyday users, MAGI demonstrates that more AI doesn’t automatically mean better AI. How the models interact matters as much as which models you choose.

The NERV dashboard is admittedly a gimmick, but it’s a gimmick that makes the underlying concept more tangible. Watching three AI systems argue, shift positions, and eventually reach a better answer together feels like a preview of how AI decision-making might work in the future.

Source: MAGI GitHub
